Post summary: How to record a simulation with Gatling recorder and play it back.

This post is part of the Performance testing with Gatling series, in which the Gatling performance testing tool is explained in detail.

Run the recorder

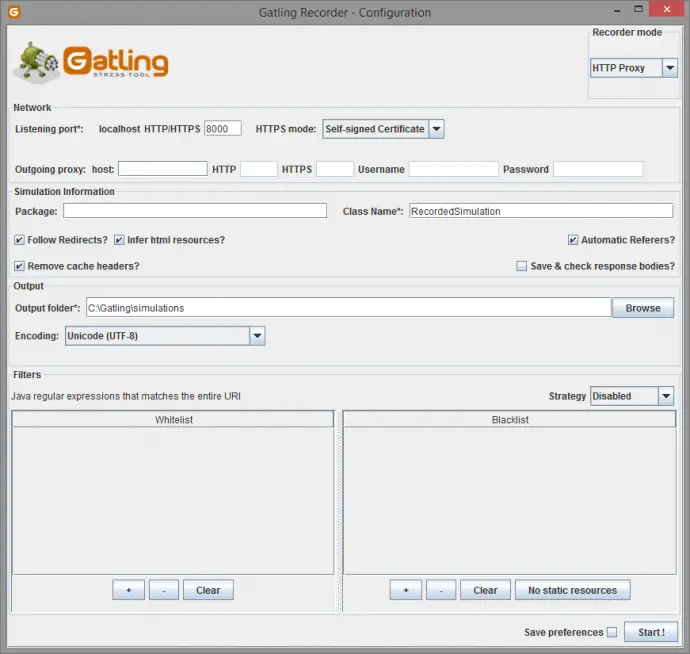

Once Gatling is downloaded, the recorder can be started with “GATLING_HOME\bin\recorder.bat”. The recorder has two working modes: HTTP Proxy and HAR Converter.

Configure browser proxy

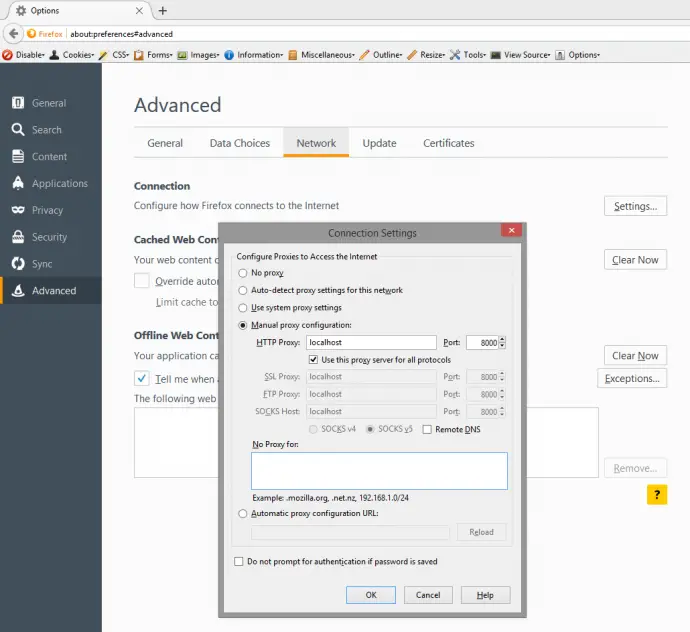

To be captured in HTTP Proxy mode, network traffic has to be routed through Gatling Recorder. By default, the recorder listens on port 8000, but this is configurable. If a web application is being tested, the browser should be configured to work through the Gatling Recorder proxy. Firefox is configured from Tools -> Options -> Advanced -> Network -> Settings… -> Manual proxy configuration: -> localhost with Port: 8000, Use this proxy server for all protocols.

Microsoft Edge also has its own proxy settings: Settings -> Network & Internet -> Proxy -> Manual proxy setup.

Configure system proxy

If the network traffic to be recorded is not browser-based, e.g. a REST or SOAP call, then a system-wide proxy should be configured. On Windows this is done through Control Panel -> Internet Options -> Connections -> LAN Settings -> Use a proxy server for your LAN. This configuration forces applications’ network traffic to go through the configured proxy. The same setting is also used to configure the proxy for the Chrome, Opera and Internet Explorer browsers.
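When the traffic comes from your own code, the proxy can also be set on the HTTP client itself instead of system-wide. Below is a minimal sketch, assuming Java 11+ and the recorder listening on localhost:8000 as described above. The class and method names are hypothetical, and the target URL is passed on the command line.

```java
import java.net.InetSocketAddress;
import java.net.ProxySelector;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical helper: routes requests of a Java HTTP client through the recorder proxy.
final class RecorderProxyClient {

    // Builds a selector that sends every HTTP(S) request to the given proxy.
    static ProxySelector recorderProxy(String host, int port) {
        return ProxySelector.of(new InetSocketAddress(host, port));
    }

    // Sends a GET request through the proxy and returns the HTTP status code.
    static int get(ProxySelector proxy, String url) throws Exception {
        HttpClient client = HttpClient.newBuilder().proxy(proxy).build();
        HttpRequest request = HttpRequest.newBuilder(URI.create(url)).GET().build();
        return client.send(request, HttpResponse.BodyHandlers.discarding()).statusCode();
    }

    public static void main(String[] args) throws Exception {
        // Assumption: the recorder runs locally on its default port 8000.
        ProxySelector proxy = recorderProxy("localhost", 8000);
        if (args.length > 0) {
            // Performs a real request, so the recorder must be started first.
            System.out.println(get(proxy, args[0]));
        } else {
            System.out.println("Proxy: " + proxy.select(URI.create("http://example.com/")));
        }
    }
}
```

Run it with the URL of the service under test as the only argument while the recorder is started. The request should then appear in the recorder window like any browser request.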

Use Gatling Recorder’s HTTP Proxy

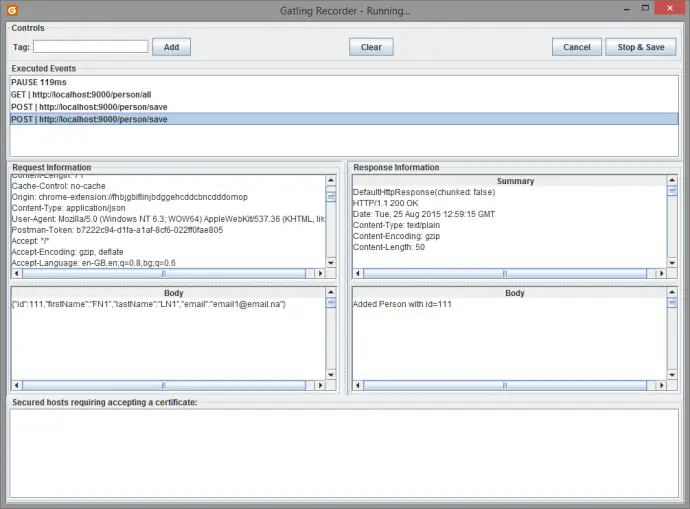

Once a proxy is configured, the “Start” button runs the Gatling Recorder proxy and opens a new window that shows all requests being captured. The “Stop & Save” button stops the proxy and saves all requests that went through it into a Gatling simulation file. The screenshot shows a recording of a test against the RESTful stub server built in the Build a RESTful stub server with Dropwizard post, which is used for testing purposes throughout this tutorial series.

HTTPS and Gatling Recorder

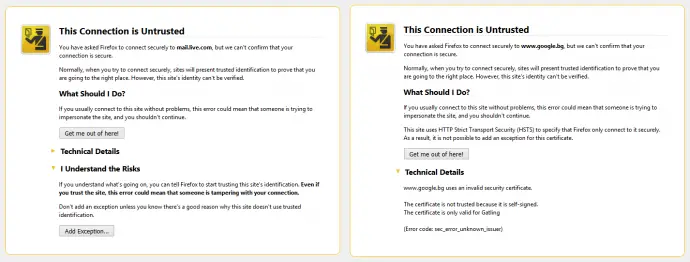

HTTPS is HTTP over an SSL or TLS security layer. It is designed to bring security to normal HTTP communication. This security is guaranteed by the server being issued a certificate by a certification authority. Gatling intercepts the traffic between the server and the client (browser), hence it is considered an intruder into the safe HTTPS communication. The recorder provides several mechanisms to handle this, and they are selected through the HTTPS mode configuration. The easiest to use is “Self-signed Certificate”. In this mode Gatling uses a certificate which is not issued for the web application being tested, hence browsers recognize it as invalid. The browser warns that the certificate is invalid and asks for the user’s permission to continue. Beware: in normal daily internet browsing such a warning is a sign that something is wrong and your HTTPS connection might be sniffed, so be careful what data you provide in such cases. Some sites are configured in a manner that if the certificate is invalid you are unable to proceed. The screenshot shows how Firefox reacts in both cases. When it is possible to continue, there is an “Add Exception…” button.

The other options to handle the certificate problem are the “Provided Keystore” and “Certificate Authority” HTTPS modes. For both, a valid certificate should be issued for the domain under test. More details on how to do this can be found on the Gatling Recorder page.

If the traffic to be captured is not browser-based, the tools used to simulate requests should support working without valid certificates when “Self-signed Certificate” mode is used. If custom code that sends SOAP requests is being written, the Send SOAP request over HTTPS without valid certificates post describes how to work without valid certificates. The same approach can also be applied to RESTful HTTPS calls.
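For custom Java code, one way to work without valid certificates is to relax certificate and hostname checks for the duration of the recording. The following is a minimal sketch using only the JDK, assuming the recorder proxy runs on localhost:8000. The class and method names are hypothetical, and this is not necessarily the approach taken in the referenced post. Such a client accepts any certificate, so it should be used only for recording against a test system.

```java
import java.net.InetSocketAddress;
import java.net.Proxy;
import java.net.URI;
import java.security.SecureRandom;
import java.security.cert.X509Certificate;
import javax.net.ssl.HttpsURLConnection;
import javax.net.ssl.SSLContext;
import javax.net.ssl.TrustManager;
import javax.net.ssl.X509TrustManager;

// Hypothetical helper for recording sessions only: it disables certificate validation.
final class RecordingHttpsClient {

    // Trust manager that accepts any certificate chain without checking it.
    static X509TrustManager trustAll() {
        return new X509TrustManager() {
            @Override public void checkClientTrusted(X509Certificate[] chain, String authType) { }
            @Override public void checkServerTrusted(X509Certificate[] chain, String authType) { }
            @Override public X509Certificate[] getAcceptedIssuers() { return new X509Certificate[0]; }
        };
    }

    // TLS context backed by the trust-all manager above.
    static SSLContext trustAllContext() throws Exception {
        SSLContext context = SSLContext.getInstance("TLS");
        context.init(null, new TrustManager[] { trustAll() }, new SecureRandom());
        return context;
    }

    // Sends a GET request over HTTPS through the proxy and returns the status code.
    static int get(String url, String proxyHost, int proxyPort) throws Exception {
        Proxy proxy = new Proxy(Proxy.Type.HTTP, new InetSocketAddress(proxyHost, proxyPort));
        HttpsURLConnection connection =
                (HttpsURLConnection) URI.create(url).toURL().openConnection(proxy);
        connection.setSSLSocketFactory(trustAllContext().getSocketFactory());
        // The recorder's certificate is not issued for the host under test,
        // so the hostname check is skipped as well.
        connection.setHostnameVerifier((hostname, session) -> true);
        return connection.getResponseCode();
    }

    public static void main(String[] args) throws Exception {
        if (args.length > 0) {
            // Performs a real request, so the recorder must be started first.
            System.out.println(get(args[0], "localhost", 8000));
        } else {
            System.out.println("Context protocol: " + trustAllContext().getProtocol());
        }
    }
}
```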

Use Gatling Recorder’s HAR Converter

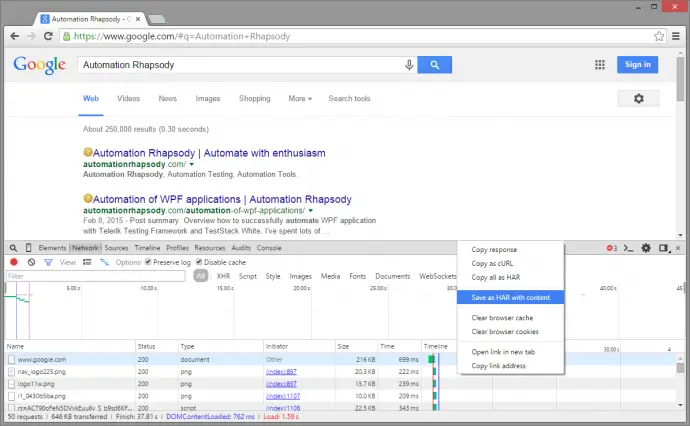

Generating and handling SSL certificates can be a painful process. Before diving into it, another option worth considering is to use Gatling Recorder in HAR Converter mode. To do this, all network traffic should be recorded as an HTTP Archive (HAR). This can easily be done with Chrome DevTools, which is opened with the F12 key. Remember to select the “Preserve log” checkbox before starting to record the test scenario. Once the scenario is recorded, it can be exported with a right mouse click and then “Save as HAR with content”.

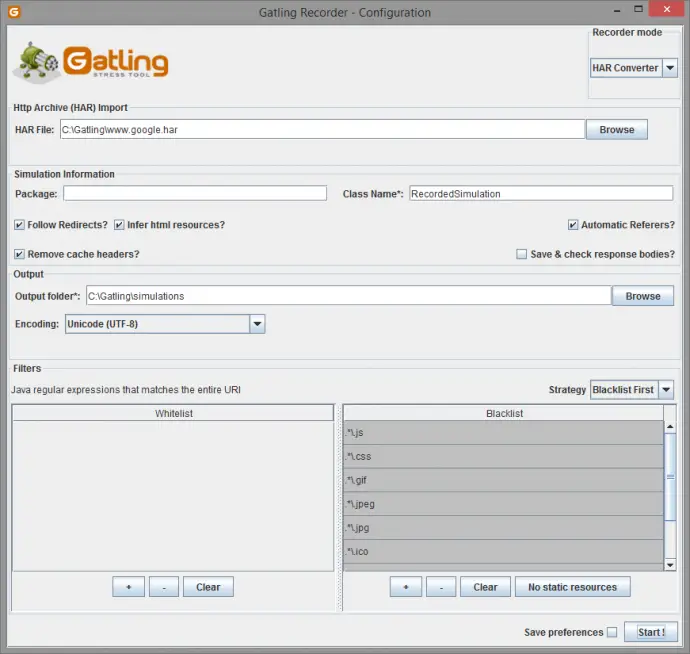

Beware: sensitive data such as passwords is also exported as plain text in the HAR archive. Once the traffic has been recorded and exported, it is converted to a Gatling simulation by Gatling Recorder. To exclude unimportant requests, e.g. images, CSS, JS and calls to other domains, there is a Blacklist that accepts Java regular expressions. A default list can be used by clicking the “No static resource” button. There is also a Whitelist, which lets through only the requests that are needed.
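To get a feeling for how Java regular expressions behave as a blacklist, candidate patterns can be tried out on sample URLs before they are typed into the recorder. The sketch below is not the recorder's own code. It assumes that a pattern has to match the whole URL, and the patterns and URLs are hypothetical examples.

```java
import java.util.List;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

// Hypothetical helper for trying out blacklist patterns on sample URLs.
final class RequestFilter {

    private final List<Pattern> blacklist;

    RequestFilter(List<String> blacklistRegexes) {
        this.blacklist = blacklistRegexes.stream()
                .map(Pattern::compile)
                .collect(Collectors.toList());
    }

    // A URL is excluded when at least one pattern matches it entirely.
    boolean isExcluded(String url) {
        return blacklist.stream().anyMatch(pattern -> pattern.matcher(url).matches());
    }

    public static void main(String[] args) {
        // Example patterns for static resources; not the recorder's default list.
        RequestFilter filter = new RequestFilter(List.of(".*\\.css", ".*\\.js", ".*\\.png"));
        List<String> urls = List.of(
                "http://localhost:9000/style.css",
                "http://localhost:9000/person/get/1");
        for (String url : urls) {
            System.out.println(url + " excluded: " + filter.isExcluded(url));
        }
    }
}
```

Note that the dot before the extension is escaped. In Java source the backslash is doubled, while a single backslash, e.g. .*\.css, is what goes into the recorder field.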

Running recorded scenario

Gatling Recorder saves scenarios in the directory configured in “Output folder*”. By default it uses the “GATLING_HOME\user-files\simulations” folder. Simulations are run with “GATLING_HOME\bin\gatling.bat”. Once started, it looks in the default simulations folder and lists all simulations found there. The user selects a simulation by its number in the list and then gives a short description for this run. Simulations can be edited before running, for example to configure a different number of users, which is 1 by default. If simulations are recorded in a folder other than the default one, the runner cannot find them. In such a case one option is to move them to “GATLING_HOME\user-files\simulations”, or:

Use non-default simulations folder

To use a simulations folder other than the default one, configure it in the “GATLING_HOME\conf\gatling.conf” file, following the configuration elements hierarchy: gatling -> core -> directory -> simulations = “C:\\Gatling\\simulations”.
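Written out as nested blocks, the hierarchy above could look like the fragment below. This is a sketch that assumes gatling.conf uses the nested block layout and reuses the example path from this post. All other settings are omitted.

```
gatling {
  core {
    directory {
      # Folder where the runner looks for simulations; backslashes are doubled inside quotes
      simulations = "C:\\Gatling\\simulations"
    }
  }
}
```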

Conclusion

Gatling Recorder is powerful and provides two ways to record a scenario: capturing network traffic in HTTP Proxy mode, or importing already captured network traffic in HAR Converter mode.